Div0 x Range Village: My First Hands-On Cloud Pentesting Workshop

2026-02-28

First, a sincere thank you to Div0 Singapore and Range Village for organizing this workshop and making the lab accessible.

I have attended cybersecurity workshops and conferences before, like DevSecCon in 2018 and a couple of Black Hat events, but this workshop felt different for me. This was the first time I joined a session that was clearly on the red team side and worked through a full cloud attack path in a guided, practical way.

If you are new to my background, you can read my origin story for context.

One of the first things the instructor mentioned was:

“Everything in the cloud is a resource.”

That framing stayed with me throughout the lab.

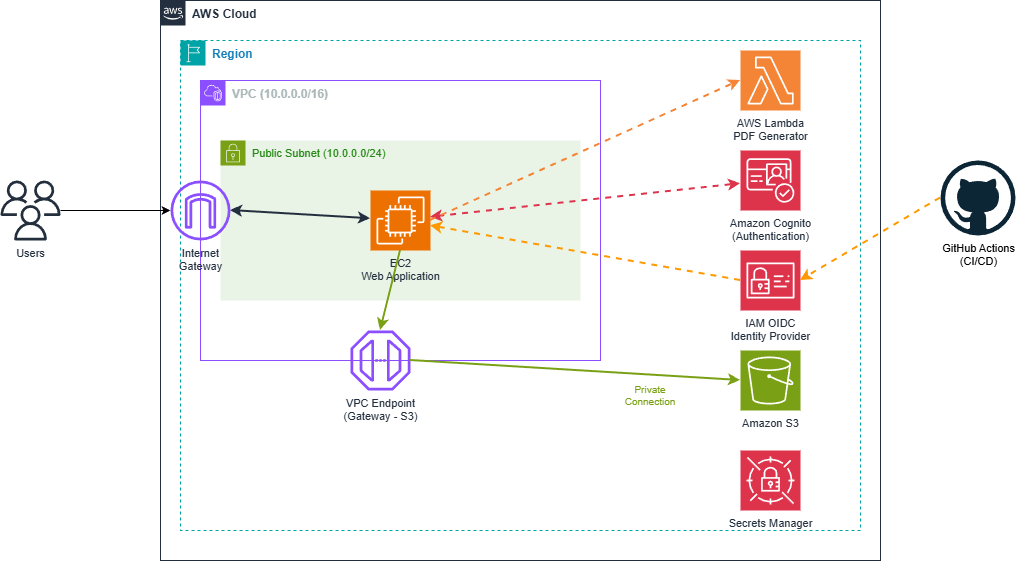

It changed my mindset from “test the web app” to “map the resource graph.” In cloud pentesting, the server, IAM role, trust policy, bucket policy, Lambda function, user pool, and even CI/CD identity federation are all real resources with permissions and relationships.

Once you think this way, you stop looking for one big vulnerability and start tracing chains across resources. The question becomes: “What can this resource talk to, assume, read, or modify next?” That shift made the lab much clearer for me.
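
To make the "resource graph" idea concrete for myself, here is a minimal sketch in Python. The graph and every resource name in it are made up for illustration and are not taken from the lab; an edge simply means "this resource can read, assume, or reach that one."

```python
from collections import deque

# Hypothetical resource graph (illustrative names only, not from the lab).
# An edge A -> B means "A can read, assume, or reach B".
RESOURCE_GRAPH = {
    "public-bucket": ["app-config"],
    "app-config": ["user-pool"],
    "user-pool": ["web-app"],
    "web-app": ["instance-role"],
    "instance-role": ["lambda-function"],
    "lambda-function": [],
}


def find_chain(graph, start, target):
    """Breadth-first search: return the shortest path from start to target, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None
```

Calling `find_chain(RESOURCE_GRAPH, "public-bucket", "lambda-function")` walks the edges one hop at a time, which is the same question as "what can this resource reach next?" asked repeatedly.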

Workshop context

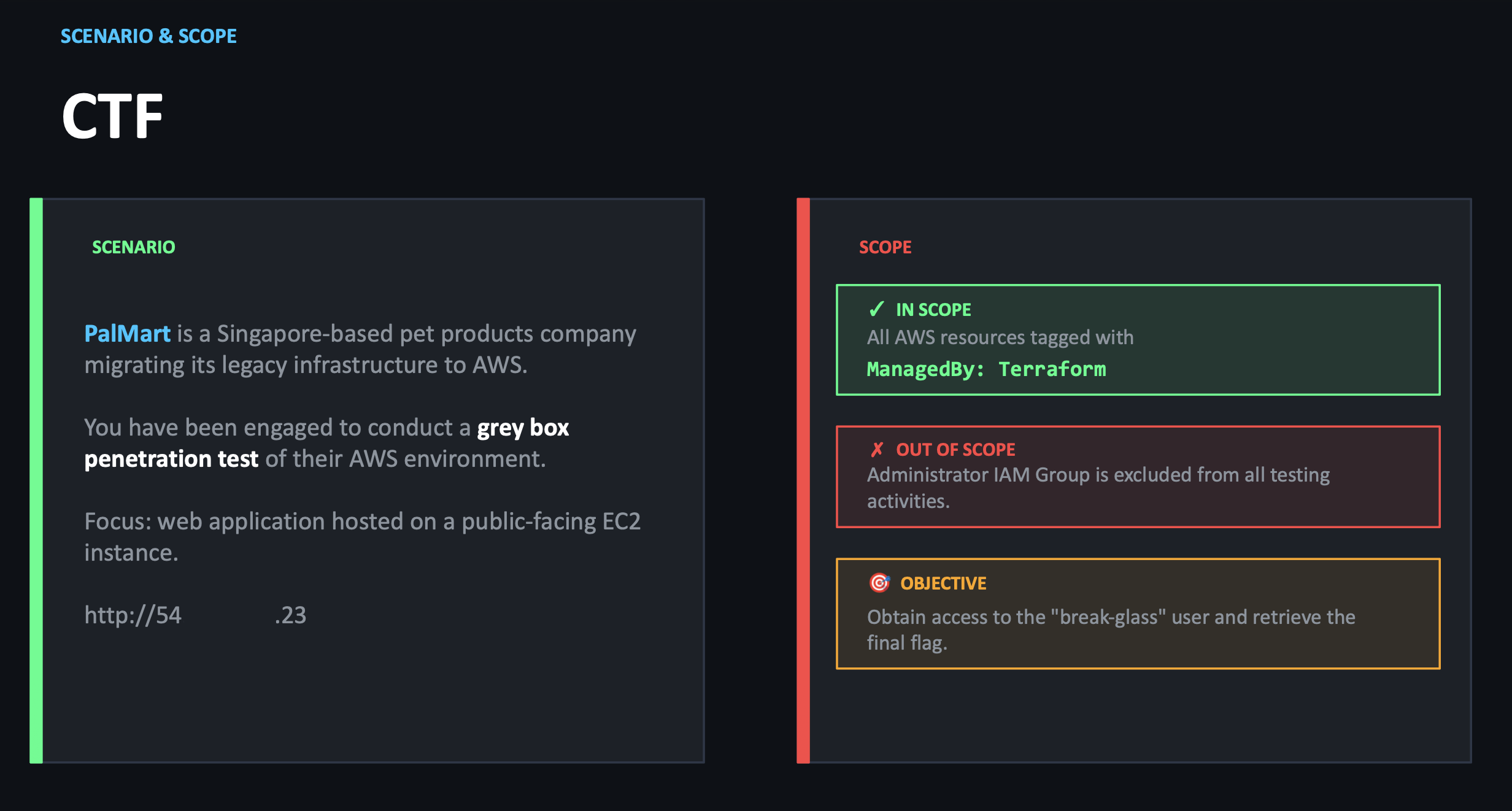

The lab scenario was a grey-box AWS assessment for a fictional company called Palmart. We were given an architecture diagram and a scoped objective: chain findings and misconfigurations until we could access a protected `break-glass` path and retrieve the final secret.

What made the lab strong was the sequence. Each issue on its own looked small. Combined, they became a full compromise path.

The lab chain (high level)

At a high level, this was the flow we learned:

- Public cloud storage exposure allowed initial discovery of sensitive application configuration.

- Leaked identity config enabled abuse of weak user registration controls.

- A server-side request flow could be abused to reach instance metadata and temporary credentials.

- Those credentials allowed service enumeration, including serverless function access.

- Hardcoded credentials in code expanded access to a wider IAM view.

- IAM visibility exposed a misconfigured GitHub OIDC trust relationship.

- OIDC role abuse granted access to remote management capabilities on EC2.

- Internal position plus policy conditions enabled access to data behind an S3 VPC endpoint control.

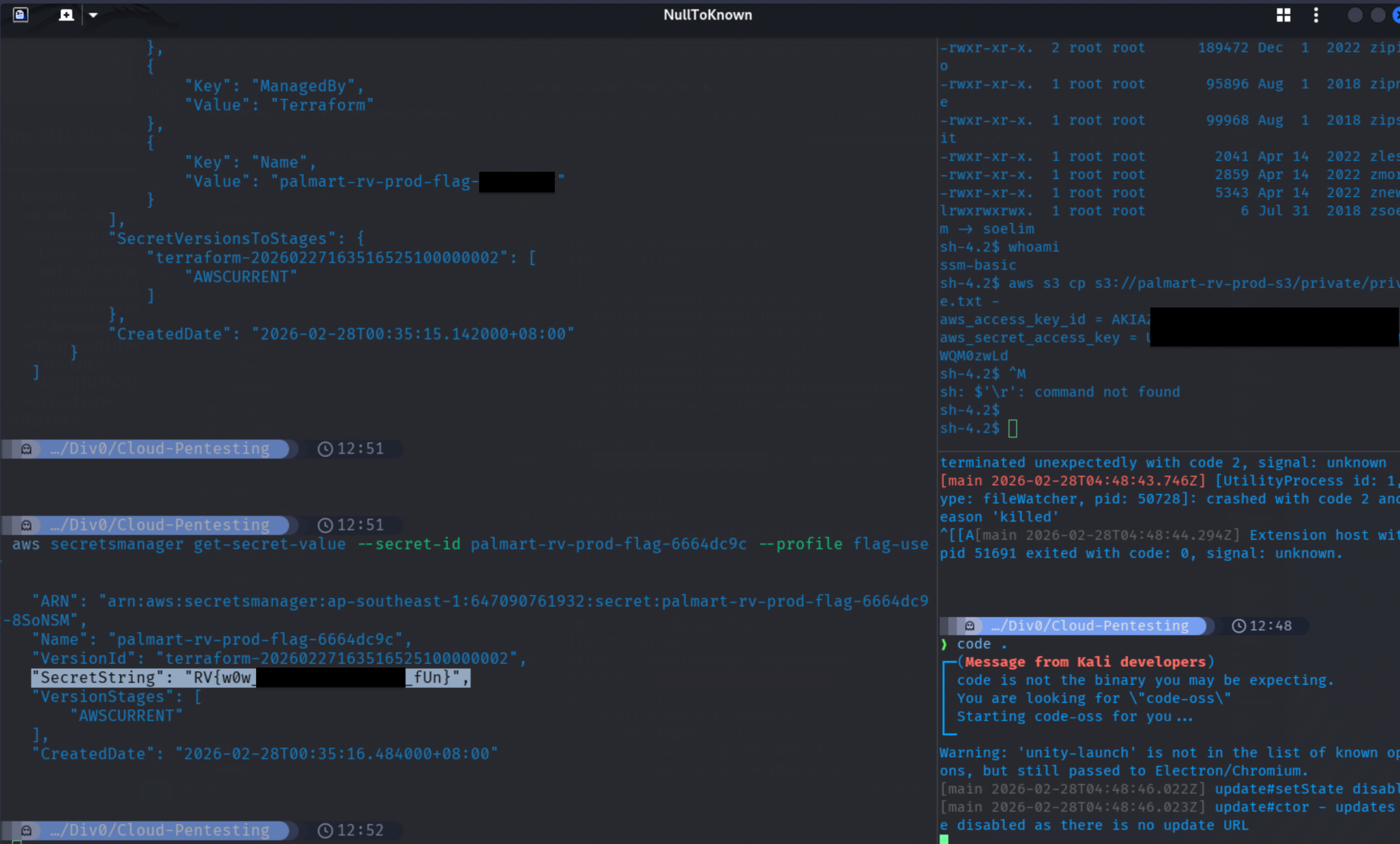

- Retrieved credentials enabled break-glass abuse and final secret access.

I am intentionally keeping this write-up sanitized and focused on learning outcomes, not step-by-step abuse instructions.

What I learned

1) Cloud breaches are often chain problems, not single bugs

The biggest lesson was attack chaining. A public bucket listing, an over-permissive trust policy, and weak IAM boundaries can look unrelated during review. In practice, they connect.

2) Identity is the core cloud attack surface

Most meaningful pivots in this lab were identity-driven:

- Cognito attribute abuse

- IMDS credential theft impact

- Overly broad IAM `List*` and `Get*`

- GitHub OIDC trust wildcards

- Break-glass misuse

For me, this reinforced that cloud pentesting and cloud defense both start with IAM design quality.
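
As a defensive illustration of the "overly broad `List*` and `Get*`" point, a rough sketch like the one below can flag wildcard actions in an IAM policy document. It assumes the policy has already been loaded as a Python dict in the standard IAM JSON shape; the function name `broad_actions` is my own, and the check is deliberately simple (it ignores `NotAction`, conditions, and resource scoping).

```python
def broad_actions(policy):
    """Return every wildcard action found in Allow statements of an IAM policy dict."""
    findings = []
    statements = policy.get("Statement", [])
    # IAM allows a single statement object instead of a list.
    if isinstance(statements, dict):
        statements = [statements]
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        # "Action" may be a single string or a list of strings.
        if isinstance(actions, str):
            actions = [actions]
        for action in actions:
            if "*" in action:
                findings.append(action)
    return findings
```

For a policy that allows `iam:List*`, `iam:Get*`, and `s3:GetObject`, this returns the two wildcard entries and leaves the specific action alone. A real review would still need to look at which resources those actions apply to.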

3) CI/CD trust boundaries are production boundaries

The OIDC section was one of the strongest examples in the workshop. If trust policies are too broad, your pipeline identity can become an attacker’s entry point.

I now treat OIDC trust policy review as part of core appsec and platform security, not just “DevOps config.”
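
Here is a hedged sketch of what that review could look like in code. It assumes a role trust policy loaded as a Python dict and checks the GitHub `sub` claim condition (`token.actions.githubusercontent.com:sub`) for wildcards or for being absent. The function name is hypothetical, and the check is simplified: it matches the condition key exactly and does not distinguish between condition operators.

```python
SUB_KEY = "token.actions.githubusercontent.com:sub"


def risky_oidc_statements(trust_policy):
    """Return human-readable reasons a GitHub OIDC trust policy looks too broad."""
    reasons = []
    statements = trust_policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]
    for index, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        if isinstance(actions, str):
            actions = [actions]
        if "sts:AssumeRoleWithWebIdentity" not in actions:
            continue
        # Collect every value constrained on the sub claim, under any operator.
        subs = []
        for conditions in stmt.get("Condition", {}).values():
            if SUB_KEY in conditions:
                value = conditions[SUB_KEY]
                subs.extend([value] if isinstance(value, str) else value)
        if not subs:
            reasons.append(f"statement {index}: no condition on the sub claim")
        for sub in subs:
            if "*" in sub:
                reasons.append(f"statement {index}: wildcard in sub claim: {sub}")
    return reasons
```

A condition such as `repo:example-org/*` would be flagged, while an exact value such as `repo:example-org/example-repo:ref:refs/heads/main` would pass. The org and repo names here are placeholders.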

4) Network controls help, but policy logic still wins

Even with VPC endpoint constraints, access decisions still depended on identity and policy conditions. That is a useful reminder: network controls reduce risk, but bad IAM design can still open the door.

Defensive checklist I am taking forward

- Enforce least privilege for IAM users and roles, especially around enumeration and credential-management actions.

- Lock down OIDC trust policies to exact org/repo/branch claims.

- Remove hardcoded secrets from code and use managed secret storage.

- Harden metadata exposure paths and validate outbound URL features to reduce SSRF impact.

- Add high-priority alerting for break-glass activity and access key creation events.

- Test cloud controls as chains during reviews, not as isolated checks.
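
For the hardcoded-secrets item, a small sketch of the idea: scan text for strings shaped like AWS access key IDs (20 characters starting with `AKIA` for long-term keys or `ASIA` for temporary ones). This is only a pattern match under that assumption, not a replacement for a proper secret scanner, and the function name is my own.

```python
import re

# Matches strings shaped like AWS access key IDs: AKIA/ASIA plus 16 uppercase
# letters or digits. This is a shape check only; it cannot tell if a key is live.
ACCESS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b")


def find_access_key_ids(text):
    """Return (line_number, match) pairs for strings that look like access key IDs."""
    hits = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for match in ACCESS_KEY_ID.finditer(line):
            hits.append((line_number, match.group(0)))
    return hits
```

Running it over a file that contains AWS's documented example key `AKIAIOSFODNN7EXAMPLE` on its second line would report that line number and the matched string.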

Personal takeaway

This workshop gave me a practical bridge from my platform/DevOps background into offensive cloud thinking. It was not about “hacking tricks.” It was about understanding how cloud systems fail when identity, trust, and policy are designed without adversarial thinking.

That shift in perspective is exactly what I wanted from this session.

Also, this was my first time capturing a flag in a physical, live workshop CTF setting. I have been grinding HTB boxes for a while, but doing it live in a room with other builders and hackers had a different kind of thrill.

I will keep documenting this path in public through my [projects](/projects) and blog posts as I go deeper into red teaming fundamentals with a defense-first mindset.

Thanks again!

- Div0 meetup page: https://www.meetup.com/div0_sg/events/313075702/?eventOrigin=group_upcoming_events

- Range Village: https://www.rangevillage.org/